The Basics

What people usually mean when they say "AI"

When people talk about AI tools for research, they are almost always talking about one specific kind of program: a large language model, or LLM. You type something in plain text, maybe upload a file, the model reads it, and it writes a response back. These models work because they were trained on a massive amount of text: books, research papers, code, documentation, web pages. The result is a program that can summarize a paper, explain an equation, draft an email, or answer a question about almost any topic, all through the same text interface. It is not always right, and it works better in some areas than others, but for a surprising range of tasks it is genuinely helpful.

The easiest way to try one is to open a browser (Safari, Google Chrome, Firefox, etc.) and go to a site like ChatGPT.com, Claude.ai, or Gemini.google.com. You do not need to install anything. You type a question, hit enter, and read what comes back. That is where we start.

What AI can already do for you

AI in your browser is a strong first stop for literature review and reading papers. You can hand it a PDF and ask for the central claims, the methodology, the assumptions, and the gaps. It is also very good at one-shot questions: define this term, explain this equation, summarize this section, tell me why this approximation is valid.

These models handle almost anything that is text in, text out. Need a document translated? They can do that very well across dozens of widely used languages. Need a ten-page report condensed into a paragraph? A rough draft rewritten in a different tone? Two competing explanations compared side by side? All of that now works reliably.

If you are stuck on something and just need a quick, clear explanation, opening ChatGPT or Claude and asking is often faster than searching through documentation or the internet. I often use ChatGPT to find deals on flights. A chatbot is not a search engine, but for a lot of tasks it gets you to the answer faster than one.

But aren't LLMs just limited to what they read in training? If I'm working on a new problem, they won't be able to help, right?

No. These models do not just copy and paste from their training data. They learned patterns and relationships across that data, which means they can generalize. If you describe a new problem clearly, the model can reason about it using everything it has seen about similar problems. It will not always get it right, but it can often point you in a useful direction, suggest approaches you had not considered, or catch mistakes in your reasoning. The key is giving it enough context about your specific situation.

To get a feel for what these models can do, try feeding one a recent paper. Upload a PDF or paste an arXiv link into ChatGPT (Thinking mode) and ask:

"Are there any subtleties that aren't thoroughly addressed in this PDF?"

AI can teach you about itself

This is maybe the most important difference between AI and previous technological breakthroughs: it can explain itself. If you get confused at any point in this tutorial, or later when you are using these tools on your own, you can just ask the model for help. If you are on your computer and feel lost, take a screenshot, upload it to the chat, and ask it where to click. The model will read the image and walk you through it.

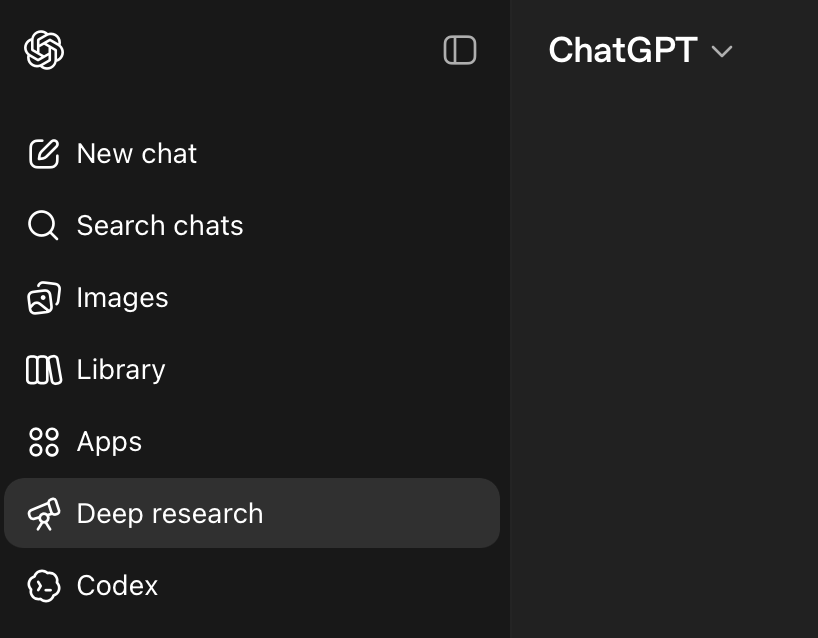

Deep research

Some of these platforms also offer a mode that goes beyond a single response. ChatGPT's Deep Research, for example, will take a question and spend around 30 minutes on it: reading sources, following citations, cross-referencing claims, and assembling a structured report with references. It is probably the most comprehensive 30-minute literature search that has ever existed. It is not perfect, and you should still check its citations, but as a starting point it can save you hours of work.

How these models actually work

A language model predicts the next piece of text based on patterns it learned during training. Its answer is shaped by two things: the broad knowledge it absorbed from training data, and the specific text you put in front of it right now. That second part is called the context, and the limit on how much of it the model can take in at once is the context window. If the important material is missing from the context, or if the context is so full that the important material gets buried, the answer gets worse. Training gives the model general knowledge; context gives it the specifics of your problem. The cleaner your context, the better the answer. More on this later.
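If it helps to see "predict the next piece of text" in concrete terms, here is a deliberately tiny sketch in Python. It is not how ChatGPT or Claude is built. It is a toy that counts which word tends to follow which in a few made-up sentences, then uses those counts to guess the next word. The training sentences and the function names (train, predict_next) are invented for this illustration. The biggest simplification is that this toy looks only at the last word you give it, while a real model takes in everything in its context.

```python
from collections import Counter, defaultdict

# Made-up "training data" for illustration only.
# A real model is trained on vastly more text.
TRAINING_TEXT = (
    "the model reads the prompt . "
    "the model writes a response . "
    "the model reads the paper . "
    "the student reads the paper ."
)


def train(text):
    """Count which word follows each word in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, context):
    """Guess the next word from the last word of the context.

    Toy simplification: only the final word is used. Returns None
    if that word never appeared in the training text.
    """
    last_word = context.split()[-1]
    candidates = follows.get(last_word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


patterns = train(TRAINING_TEXT)
print(predict_next(patterns, "the model"))           # reads
print(predict_next(patterns, "the model writes a"))  # response
print(predict_next(patterns, "quasar"))              # None
```

The same learned counts produce different guesses depending on what you put in front of them, and a word the toy has never seen leaves it with nothing to go on. That is the training-versus-context split in miniature.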

Why some modes "think" longer

"Thinking" models take longer to respond because they use a technique called chain-of-thought reasoning: before giving you a final answer, the model works through the problem step by step internally, checking its own logic along the way. This is slower, but for harder problems it produces significantly better results. The fast modes skip this step, which is fine for simple tasks but means they are more likely to make mistakes on anything that requires real reasoning. Thinking modes are also more expensive to run, which is why platforms meter them separately or limit how often you can use them.

So where does this break down?

Everything above works for one-off questions. The problems start when you try to do real, sustained work. You will find yourself re-uploading the same papers, re-explaining the same context, and starting from scratch every time you open a new chat, because a new chat generally does not carry over what you discussed in the last one. The next section walks through the main AI platforms and what each one costs, so you can pick the right one before you go any further.